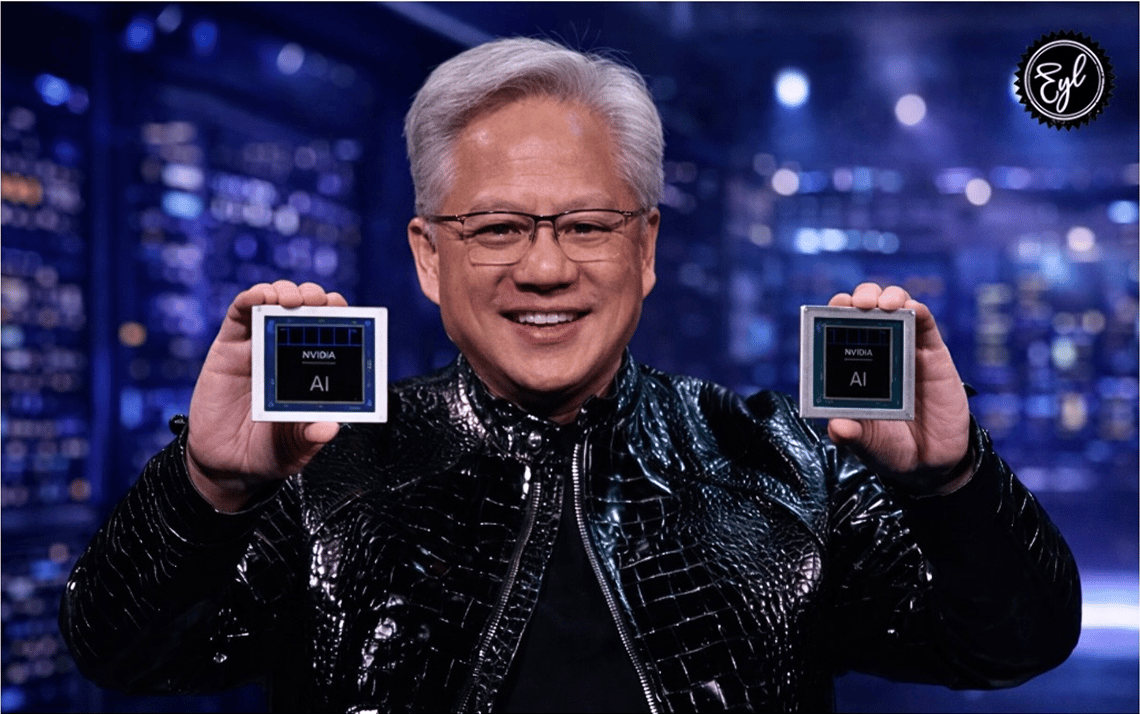

Jensen Huang's Monday keynote at GTC 2026 ran two hours and covered a product roadmap that, if it executes on schedule, will make the past three years of AI infrastructure investment look like a warm-up.

Vera Rubin and the NVL72 Supercomputer

The headliner was Vera Rubin — Nvidia's next-generation GPU and CPU platform, designed to succeed the Blackwell architecture that has been shipping at record volume since late 2024. Vera Rubin pairs Nvidia's new Vera CPU, optimized for agentic AI workloads, with Rubin GPU arrays built around fourth-generation high-bandwidth memory and a fabric interconnect that dramatically increases the effective memory bandwidth available to AI models running inference at scale.

The NVL72 Supercomputer is the clearest statement of what Nvidia intends this platform to mean. Built from 18 DGX Rubin NVL72 racks of 72 GPUs each (1,296 GPUs in total) and 640 terabytes of HBM4 memory, the system delivers 3.6 exaflops of inference performance and 700 petaflops of training performance in a single data center deployment. At that scale, the NVL72 is not a research tool. It is the kind of infrastructure on which production AI systems at Anthropic, OpenAI, Meta, and their peers are expected to run in 2026 and 2027.
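The quoted totals can be divided down to rough per-GPU averages. Here is a back-of-envelope sketch that assumes decimal units (1 TB = 1,000 GB) and spreads each system total evenly across all GPUs. The coverage does not state the numeric precision behind the inference and training FLOPS figures, so treat the per-GPU numbers as illustrative only. The function name is hypothetical.

```python
# System totals as quoted for the 18-rack Vera Rubin deployment.
RACKS = 18
GPUS_PER_RACK = 72            # implied by the "NVL72" rack name
TOTAL_HBM4_TB = 640
INFERENCE_EXAFLOPS = 3.6
TRAINING_PETAFLOPS = 700


def per_gpu_averages() -> dict:
    """Divide the quoted system totals evenly across every GPU (decimal units)."""
    gpus = RACKS * GPUS_PER_RACK
    return {
        "gpus": gpus,
        "hbm4_gb_per_gpu": TOTAL_HBM4_TB * 1000 / gpus,
        "inference_pflops_per_gpu": INFERENCE_EXAFLOPS * 1000 / gpus,
        "training_pflops_per_gpu": TRAINING_PETAFLOPS / gpus,
    }


if __name__ == "__main__":
    for name, value in per_gpu_averages().items():
        print(f"{name}: {value:.2f}")
```

The GPU count checks out against the rack count. The memory and FLOPS averages are simple even splits and say nothing about how capacity is actually distributed inside each rack.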

The $1 Trillion Math

Huang's $1 trillion cumulative revenue projection, covering the Blackwell and Rubin architectures through 2027, is the number investors took away from the conference. To be precise about the math: $1 trillion over three years averages about $333 billion a year, roughly two and a half times the approximately $130 billion Nvidia posted in fiscal 2025. The projection therefore implies that Nvidia's revenue base at least doubles again before 2027. The company delivered that kind of growth from 2023 to 2025 on the back of the first AI infrastructure buildout. The projection is a bet that the second wave (inference, agentic AI, and physical AI) will be as large as the first.
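One way to see what the projection demands is to back out the constant annual growth rate it would require. The following sketch rests on loudly simplified assumptions: the full $1 trillion is treated as total company revenue, spread across the three fiscal years after fiscal 2025, and revenue grows at a single constant rate from the roughly $130 billion base. The real projection covers Blackwell and Rubin revenue specifically, and no year-by-year split is given. The function names are hypothetical.

```python
FY2025_REVENUE_B = 130.0   # "roughly $130 billion" in fiscal 2025
PROJECTION_B = 1000.0      # $1 trillion cumulative, in billions
YEARS = 3                  # the article's "over three years" framing


def cumulative_revenue(growth: float) -> float:
    """Sum of the next YEARS annual revenues, each compounding by `growth` from the base."""
    return sum(FY2025_REVENUE_B * (1 + growth) ** year for year in range(1, YEARS + 1))


def required_growth(target: float = PROJECTION_B, tol: float = 1e-9) -> float:
    """Bisect for the constant annual growth rate whose cumulative revenue hits `target`."""
    lo, hi = 0.0, 2.0  # cumulative_revenue increases with growth, and this range brackets the target
    while hi - lo > tol:
        mid = (lo + hi) / 2
        if cumulative_revenue(mid) < target:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2


if __name__ == "__main__":
    average = PROJECTION_B / YEARS
    g = required_growth()
    final_year = FY2025_REVENUE_B * (1 + g) ** YEARS
    print(f"average per year: ${average:.0f}B ({average / FY2025_REVENUE_B:.1f}x fiscal 2025)")
    print(f"constant growth needed: {g:.1%} per year")
    print(f"implied final-year revenue: ${final_year:.0f}B")
```

Under these simplified assumptions, the required rate comes out near 55% a year, and final-year revenue lands near $485 billion. By that measure, "doubles again" is a conservative reading of the projection.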

Groq-3, Space-1, and the Third Vertical

Also announced at GTC was the Groq-3 LPU (language processing unit), Nvidia's latest processor architecture designed for inference rather than training. LPUs generate tokens sequentially faster than traditional GPU architectures and with meaningfully lower energy consumption per token. For data center operators running inference at the billions-of-requests-per-day scale that OpenAI, Google, and Anthropic are approaching, those energy economics matter substantially.
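The energy point follows from a simple product: daily energy equals requests per day times tokens per request times energy per token. Any per-token saving therefore scales linearly with traffic. Here is a minimal sketch of that product. Every input value in it is hypothetical and chosen only to show the arithmetic. Neither the article nor Nvidia gives per-token energy figures for Groq-3, and the function ignores idle power, cooling, and other facility overhead.

```python
JOULES_PER_MWH = 3.6e9  # 1 MWh = 3.6 billion joules


def daily_inference_energy_mwh(requests_per_day: float,
                               tokens_per_request: float,
                               joules_per_token: float) -> float:
    """Energy spent generating tokens in one day, ignoring idle and cooling overhead."""
    tokens_per_day = requests_per_day * tokens_per_request
    return tokens_per_day * joules_per_token / JOULES_PER_MWH


if __name__ == "__main__":
    # Hypothetical inputs for illustration only; not figures from Nvidia or any operator.
    requests, tokens = 1e9, 500
    baseline = daily_inference_energy_mwh(requests, tokens, joules_per_token=0.5)
    improved = daily_inference_energy_mwh(requests, tokens, joules_per_token=0.25)
    print(f"baseline: {baseline:,.1f} MWh/day, improved: {improved:,.1f} MWh/day")
    print(f"saved: {baseline - improved:,.1f} MWh/day, {365 * (baseline - improved):,.0f} MWh/year")
```

Because the relationship is linear, halving energy per token halves the daily energy bill at any traffic level. At billions of requests per day, that makes per-token efficiency the dominant cost lever.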

The Space-1 module, a compact AI inference system designed for satellite and orbital deployment, was among the more unexpected announcements. It signals that Nvidia is treating physical AI — AI running on devices and systems in the physical world — as the third major revenue vertical after training and data center inference.

China and the Global Infrastructure Play

The approval clearing H200 sales into China, which landed during GTC rather than before it, was the event the market had been hoping to see. With China back in scope, the $1 trillion projection becomes more mathematically defensible. China's major AI cloud providers (Alibaba, Baidu, ByteDance, Tencent) have been running infrastructure buildouts at capital intensity levels comparable to those of U.S. hyperscalers. GTC 2026 drew more than 30,000 attendees from 190 countries, and the presence of Samsung and SK Hynix on stage as memory partners reflects the degree to which Nvidia has become the organizing infrastructure layer for the global AI buildout. Friday's analyst session will be the first opportunity to get formal guidance figures. Watch Vera Rubin shipment timing and any China H200 revenue range closely.