KEY POINTS

- Google debuted its eighth-generation TPU 8t (training) and TPU 8i (inference) at Cloud Next 2026, with TPU 8t delivering three times the processing power of the Ironwood generation and 2.8x better price-to-performance.

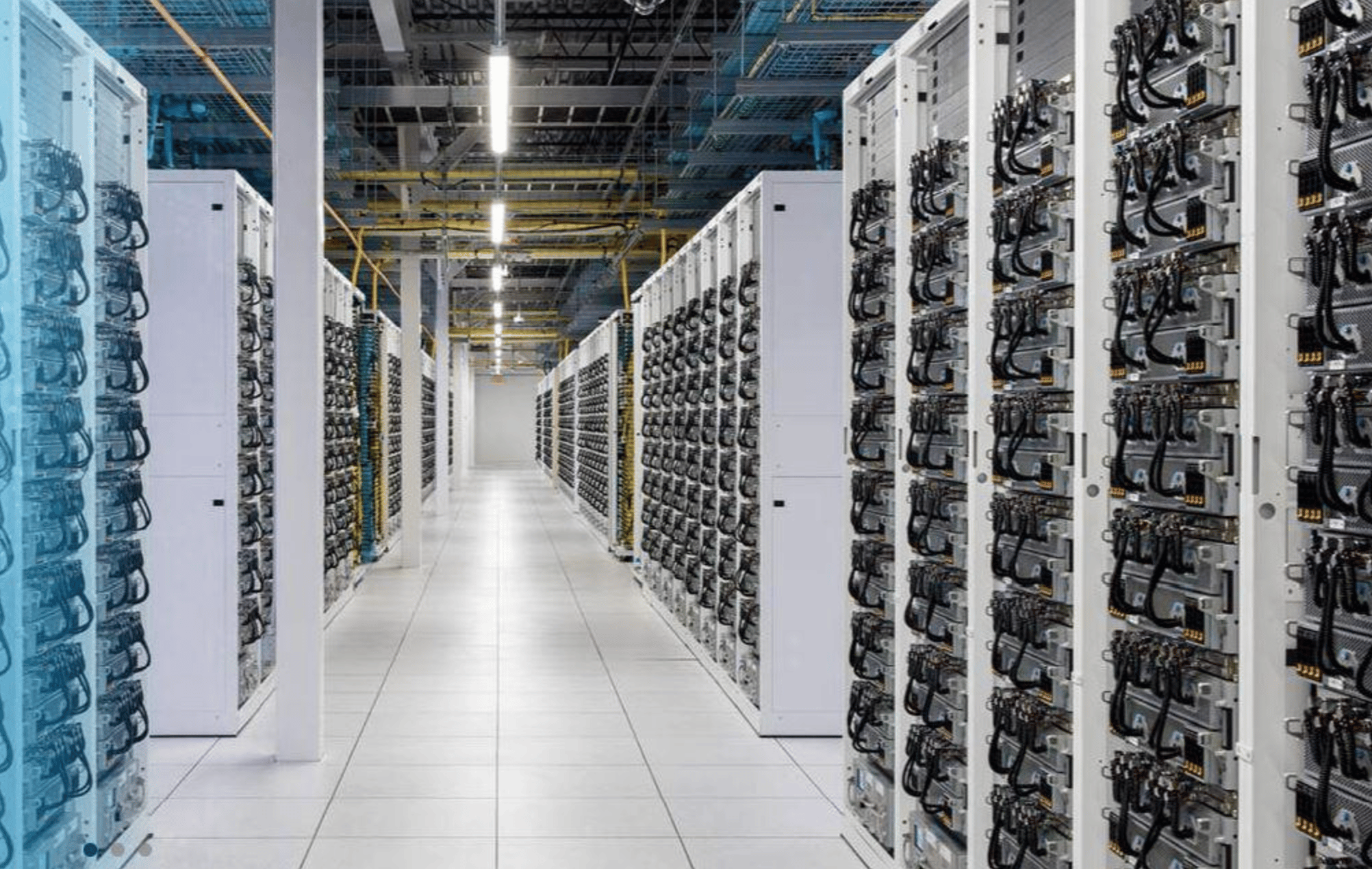

- TPU 8t scales to 9,600 chips and two petabytes of shared high-bandwidth memory per superpod, a silicon and networking configuration designed explicitly to bring frontier model training inside Google Cloud.

- Traders should watch whether GOOGL's cloud backlog commentary on the July 24 earnings call reflects TPU-driven demand displacement from Nvidia-dominant hyperscalers.

Google unveiled its eighth-generation TPU 8t and TPU 8i chips at Cloud Next 2026 in Las Vegas this week, with the training-optimized TPU 8t scaling to 9,600 chips in a single superpod and delivering three times the processing power of the prior-generation Ironwood silicon, along with roughly 2.8x better price-to-performance. Shares of Alphabet jumped on the announcement and have held the gain into Friday. The company framed the launch around agentic AI, but the real message to the market was more direct: Google now has an end-to-end training-and-inference silicon stack that no longer needs Nvidia to function at frontier scale.

The Training and Inference Split

The TPU 8t is the chip Google will use, and sell, for training. According to Google's own technical brief, a single TPU 8t superpod connects 9,600 chips with two petabytes of shared high-bandwidth memory and delivers 2x more performance per watt than the prior generation. That last number is the one to dwell on. Performance per watt matters because power is the binding constraint on hyperscale AI buildouts: not silicon cost, not network fabric, but grid-level electricity delivery. Every point of efficiency compounds across millions of training hours.
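To put the superpod figures in more concrete terms, here is a rough back-of-envelope sketch in Python. It uses only the numbers quoted above (9,600 chips, two petabytes of shared memory, a 2x performance-per-watt gain). The function names are illustrative, the petabyte is assumed to be decimal (10^15 bytes), and the 1,000 MWh baseline is a hypothetical placeholder, not a Google figure.

```python
# Back-of-envelope arithmetic from the figures quoted in the article.
# Assumptions (not from Google): decimal units, and the memory pool is
# divided evenly across chips to get a per-chip average.

CHIPS_PER_SUPERPOD = 9_600
SHARED_HBM_BYTES = 2e15          # "two petabytes", taken as 2 * 10^15 bytes
PERF_PER_WATT_GAIN = 2.0         # "2x more performance per watt"


def average_hbm_per_chip_gb(total_bytes=SHARED_HBM_BYTES,
                            chips=CHIPS_PER_SUPERPOD):
    """Average share of the memory pool per chip, in decimal gigabytes."""
    return total_bytes / chips / 1e9


def energy_for_same_job(baseline_energy_mwh, gain=PERF_PER_WATT_GAIN):
    """Energy needed for an identical training job after the efficiency gain."""
    return baseline_energy_mwh / gain


def work_at_same_power(baseline_work, gain=PERF_PER_WATT_GAIN):
    """Training work achievable inside an unchanged power envelope."""
    return baseline_work * gain


if __name__ == "__main__":
    print(f"Average HBM per chip: {average_hbm_per_chip_gb():.1f} GB")
    # Hypothetical 1,000 MWh job on the prior generation.
    print(f"Energy for the same job: {energy_for_same_job(1000.0):.0f} MWh")
    print(f"Work at the same power: {work_at_same_power(1.0):.1f}x")
```

The point of the last two functions is that a grid-constrained operator can read the same 2x figure two ways: half the energy for a fixed job, or twice the training work inside a fixed power allocation.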

The TPU 8i handles inference, and the architecture reflects a different priority: latency over raw throughput. It connects 1,152 TPUs per pod with 3x more on-chip SRAM than the prior generation, designed to concurrently run millions of agents with tight response-time budgets. The inference story matters because the unit economics of agentic AI depend on being able to run a model cheaply, repeatedly, and fast. If Google's in-house silicon lets it serve inference at materially lower cost than a GPU-based competitor, that shows up directly in Google Cloud's operating margin and in the pricing of Gemini-backed products.

What This Means for the Nvidia Trade

Nvidia remains the dominant AI silicon story and the new Rubin platform will be the reference architecture for most of the industry through 2027. But the TPU 8 announcement narrows the gap in one specific, important way. Before this week, the argument for paying Nvidia's premium was that no one else had a silicon stack that could do both training and inference at frontier scale. Google now does. The company is positioning TPU capacity as an alternative inside Google Cloud, which means enterprises running large training jobs have a credible second option for the first time.

That is not an existential threat to Nvidia. Demand for Rubin already exceeds supply through 2027, based on pre-order commentary and hyperscaler capex plans. But it is a ceiling on pricing power. A buyer with a real alternative negotiates differently than a buyer without one. The other read-through is to Nvidia's own partnership announcement with Google, which effectively acknowledged the co-existence model: Google will offer both TPU and Nvidia-based instances to enterprise customers, and compete on workload fit rather than silicon lock-in.

The Cloud Backlog Question

The real financial impact of TPU 8 will show up in Google Cloud's revenue and backlog numbers. Last quarter, Google Cloud reported revenue of $14.6 billion and operating margin above 20%, with remaining performance obligations (contracted revenue from signed deals that has not yet been recognized) at record levels. If TPU 8 accelerates enterprise adoption of Google Cloud for AI training, the RPO number on the July 24 earnings call becomes the most important disclosure of the quarter. A step-function increase signals real displacement from Nvidia-first competitors. A flat number suggests this is a margin story, not a share-shift story.

GOOGL closed Thursday at $178.55, up roughly 3% on the TPU announcement. The stock has outperformed the Nasdaq-100 by about 400 basis points year to date. Options markets are pricing a 5% move on the July earnings print, which is modest given the narrative stakes. The forward multiple remains around 22x 2026 earnings, well below Microsoft and Amazon's cloud-adjacent multiples. That is the bull case in one number.
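For readers who want to translate the implied move into price levels, the sketch below applies the 5% figure to Thursday's $178.55 close. It assumes the implied move is a symmetric band around the close, which is a simplification, and the function names are illustrative rather than taken from any pricing library.

```python
# Convert an options-implied percentage move into a price band.
# Assumption: the 5% figure is treated as a symmetric band around the
# last close; real implied distributions are generally not symmetric.

CLOSE = 178.55        # GOOGL Thursday close, from the article
IMPLIED_MOVE = 0.05   # 5% move priced for the July earnings print


def implied_band(price, move):
    """Return (low, high) price levels for a symmetric implied move."""
    delta = price * move
    return price - delta, price + delta


def inside_band(level, band):
    """True if a price level sits within the implied band."""
    low, high = band
    return low <= level <= high


if __name__ == "__main__":
    band = implied_band(CLOSE, IMPLIED_MOVE)
    print(f"Implied band: {band[0]:.2f} to {band[1]:.2f}")
    # The $185 and $170 levels flagged in the forward look.
    for level in (185.0, 170.0):
        print(level, inside_band(level, band))
```

Under these assumptions the band runs from roughly $169.6 to $187.5, so both the $185 and $170 levels sit inside the range the options market is already pricing for the print.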

The Forward Look

The next catalyst is the general availability rollout of TPU 8t and TPU 8i, which Google said will land later this year. Enterprise AI buyers will not shift workloads until they can benchmark against live silicon. The second catalyst is Nvidia's response — whether the company accelerates its Rubin cadence, cuts pricing on Blackwell Ultra to defend share, or leans harder into its CUDA software moat. Watch Nvidia's GTC fall event and any mid-cycle pricing commentary from AI-focused hyperscalers for signals. Above $185, GOOGL enters a fresh leg of its AI re-rating. Below $170, the market is saying the TPU story is priced in.